
Finding Evidence of GO’s Impact: Math Tutoring Delivers Measurable Student Gains

May 2026

Jonah Liebert

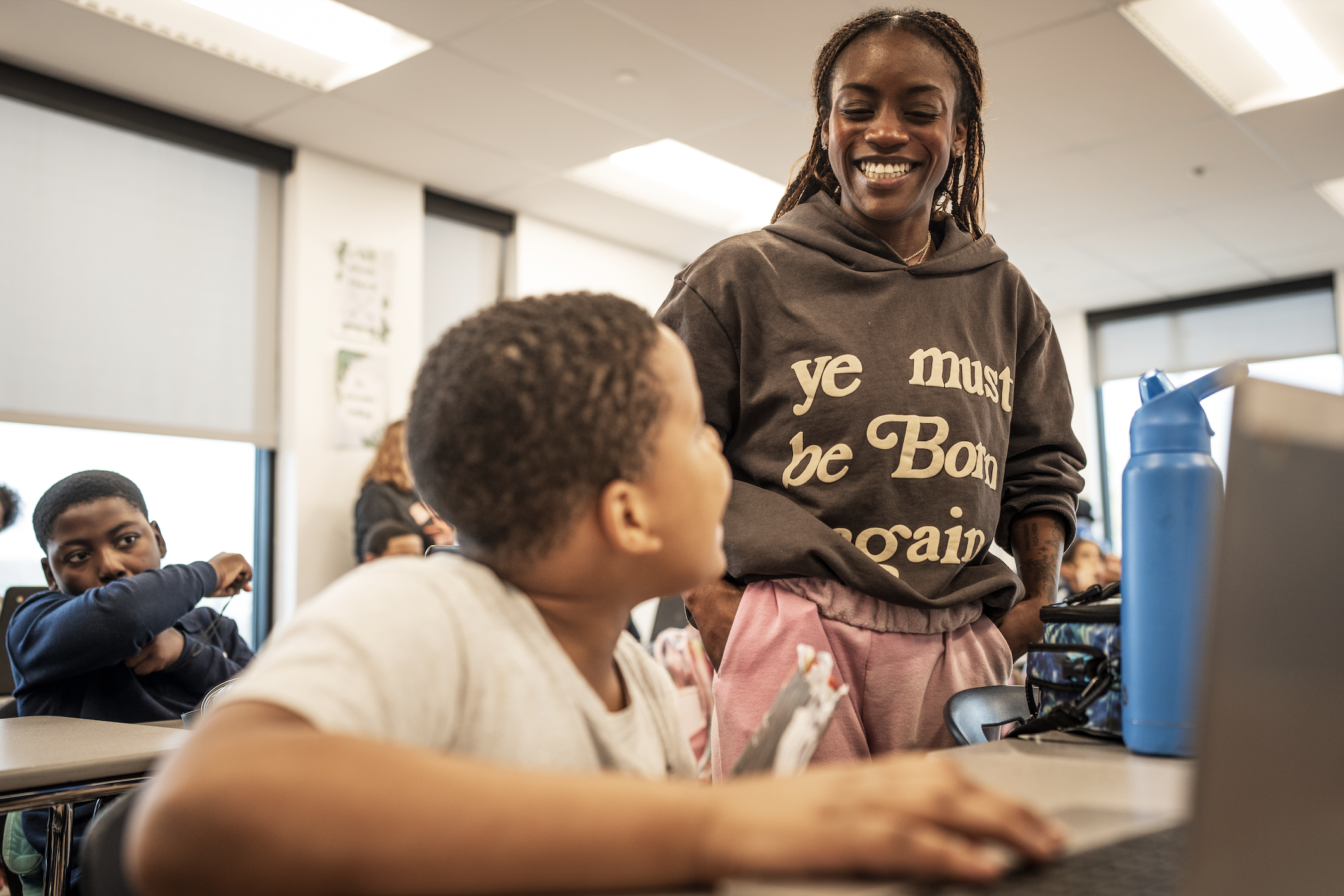

The GO Tutor Corps (GO) has been a pioneer in providing high-impact tutoring for over a decade—well before high-impact tutoring became a widely recognized strategy in education. For most of that time, our model operated on a whole-cohort basis: every student in a given grade received tutoring as part of a comprehensive school-wide program. While this approach ensured broad access and deep integration, it also presented a longstanding challenge for evaluation: without a natural comparison group, it was difficult to isolate the program’s causal impact through rigorous methods.

In recent years, as GO expanded its partnerships, some school partners began implementing the program as a targeted intervention rather than a universal service. This shift created the ideal conditions for empirical testing—allowing direct, within-school comparisons between tutored and non-tutored students in the same grades. The study of network-wide 2024-25 math achievement data represents a milestone: the first robust evaluation of our long-established model under these new conditions.

Conducted by the GO Research Team, the evaluation examined fall-to-spring achievement gains among 11,816 students in grades K–12 across our network of 28 study schools. Of these, 489 tutored students met the criteria for inclusion in the study: they were in a classroom with non-tutored students and had fall and spring test scores. To estimate the program’s impact, we used two quasi-experimental methods, propensity score matching (PSM) and inverse probability of treatment weighting (IPTW), which help rule out alternative explanations for the gains we found among tutored students. These methods bring the analysis closer to what researchers call an apples-to-apples comparison, giving us greater confidence that the tutoring program had a real impact.

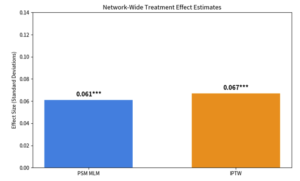
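For readers curious about how a matched comparison of this kind can be built, the following is a minimal sketch of nearest-neighbor matching on a propensity score with a caliper. It is an illustration under stated assumptions, not the study's actual code: the function name `match_with_caliper`, the greedy 1:1 matching without replacement, and the toy scores are all hypothetical, and the propensity scores are assumed to have been estimated already.

```python
def match_with_caliper(treated_scores, control_scores, caliper):
    """Greedy 1:1 nearest-neighbor matching without replacement.

    Each score is an (assumed, pre-estimated) propensity score: the
    estimated probability that a student receives tutoring.
    Returns a list of (treated_index, control_index) pairs.
    """
    available = set(range(len(control_scores)))
    pairs = []
    for t_idx, t_score in enumerate(treated_scores):
        if not available:
            break
        # Closest remaining comparison student; ties broken by lowest index.
        best = min(available, key=lambda c: (abs(control_scores[c] - t_score), c))
        # Accept the match only if it falls within the caliper.
        if abs(control_scores[best] - t_score) <= caliper:
            pairs.append((t_idx, best))
            available.remove(best)
    return pairs


# Toy example (made-up scores, not study data).
tutored = [0.30, 0.70, 0.95]
comparison = [0.28, 0.33, 0.72, 0.10]
pairs = match_with_caliper(tutored, comparison, caliper=0.05)
print(pairs)
```

In this toy example the third tutored student has no comparison student within the caliper and is left unmatched. That is the general trade-off of caliper matching: closer comparisons at the cost of dropping some tutored students from the analysis.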

Network-Wide Impact

Across the full network, tutored students demonstrated a statistically significant gain of 0.061 standard deviations over matched non-tutored peers. An additional analysis, which retained all 489 treated students, produced a very similar result of 0.067 standard deviations. In education research, these effect sizes are statistically and educationally meaningful, translating to weeks of additional learning progress—and in strong implementation settings, several months.

Figure 1. Network-wide treatment effect estimates from propensity score matching (PSM) and inverse probability of treatment weighting (IPTW). Note: * p < 0.05, ** p < 0.01, *** p < 0.001

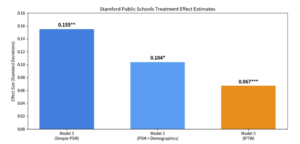
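As a rough illustration of how a weighting estimate can keep every tutored student in the analysis, here is a small sketch of an IPTW-style effect size. It rests on several assumptions that are not taken from the study: propensity scores are already estimated, average-treatment-effect weights are used, and the difference in gains is standardized by the sample standard deviation of all gains. The function names are hypothetical.

```python
from statistics import stdev


def weighted_mean(values, weights):
    """Weighted average of values."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


def iptw_effect_in_sd(treated, gains, propensity):
    """Weighted difference in mean gains, expressed in SD units.

    treated:    1 for tutored students, 0 for non-tutored students
    gains:      fall-to-spring score gains
    propensity: assumed, pre-estimated probability of being tutored
    """
    # Weight tutored students by 1/p and non-tutored students by 1/(1-p).
    weights = [1.0 / p if t else 1.0 / (1.0 - p)
               for t, p in zip(treated, propensity)]
    tutored = [(g, w) for g, w, t in zip(gains, weights, treated) if t]
    comparison = [(g, w) for g, w, t in zip(gains, weights, treated) if not t]
    diff = weighted_mean(*zip(*tutored)) - weighted_mean(*zip(*comparison))
    # Assumption: standardize by the SD of gains across all students.
    return diff / stdev(gains)


# Toy example (made-up numbers, not study data).
effect = iptw_effect_in_sd(
    treated=[1, 1, 0, 0],
    gains=[3, 5, 1, 3],
    propensity=[0.8, 0.5, 0.5, 0.2],
)
print(round(effect, 3))
```

No student is discarded here. Students who resemble the other group simply receive more weight, which is why a weighting analysis can use the full tutored sample.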

Stamford Public Schools Results (with Full Demographic Controls)

Effects were strongest and most consistent in Stamford Public Schools, where the program had high-fidelity implementation and complete demographic data. With complete data, we were able to match tutored and non-tutored students on more factors, leading to stronger confidence in the robust tutoring impact we found. Three different models were tested, progressively adding more controls:

- Model 1 (core variables only): 0.155 SD

- Model 2 (PSM with full demographics): 0.104 SD

- Model 3 (IPTW with full demographics): 0.067 SD

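All three models showed positive effects. The estimate decreased as controls were added, from 0.155 SD to 0.067 SD, but stayed positive in every specification, which supports the robustness of the finding.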

Figure 2. Stamford-specific treatment effect estimates across three increasingly rigorous models. Note: * p < 0.05, ** p < 0.01, *** p < 0.001

School-level variation highlights the critical role of implementation quality. The strongest outcomes occurred where the program benefited from consistent delivery and strong operational support.

The study’s methodological rigor strengthens confidence in these findings. We conducted extensive sensitivity analyses, including different matching calipers and alternative specifications. Post-matching covariate balance was excellent, and diagnostics confirmed sufficient overlap in propensity scores and stable weights. In fact, the research design was strong enough to meet the rigorous selection criteria of Johns Hopkins University’s Center for Research and Reform in Education (CRRE) Evidence for ESSA.
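Covariate balance is commonly summarized with a standardized mean difference (SMD) for each matching variable. The sketch below shows one conventional way to compute it. The function name, the pooled-SD formula choice, and the toy fall scores are illustrative assumptions, and the study's own diagnostics may have been computed differently.

```python
from math import sqrt
from statistics import mean, variance


def standardized_mean_difference(treated_values, control_values):
    """Difference in group means divided by the pooled standard deviation."""
    pooled_sd = sqrt((variance(treated_values) + variance(control_values)) / 2)
    return (mean(treated_values) - mean(control_values)) / pooled_sd


# Toy example: fall scores for matched tutored and comparison students
# (made-up numbers, not study data).
fall_tutored = [198, 202, 205, 210]
fall_comparison = [199, 201, 206, 209]
smd = standardized_mean_difference(fall_tutored, fall_comparison)
print(round(smd, 3))
```

A frequently cited rule of thumb treats an absolute SMD below 0.1 as good balance. That threshold is a general convention, not a criterion stated in this study.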

For school and district leaders considering evidence-based partnerships to support mathematics achievement, these results offer actionable insights. After more than a decade of refining high-impact tutoring, GO now brings both deep practical experience and rigorous empirical validation to the table. The analysis demonstrates that our model can produce accelerated gains when implemented with consistency and fidelity.

With strong evidence now in hand, high-impact tutoring represents a proven pathway to help more students not only recover lost ground but truly thrive in mathematics.